Large organizations don’t get many free passes on failed product bets. The budget cycles are longer, the approval chains are deeper, and a misfired initiative leaves a paper trail. MVP development for enterprises exists precisely to reduce that exposure by generating real evidence before major commitments are made. Organizations that follow this approach can reduce initial development costs by up to 45% while validating market fit and testing new ideas with significantly lower risk.

This guide walks through the full MVP development picture: from discovery and software engineering team structure, to cost benchmarks, AI-specific considerations, and what comes after the first version ships.

What is an MVP?

A minimum viable product (MVP) is the smallest functional version of a product built to test a specific business hypothesis with real users.

An MVP is not a stripped-down final product. It’s a focused validation tool designed to answer critical questions:

- Does the problem matter enough for users to act?

- Does the solution change behavior?

- Is there a viable path to business value?

Unlike prototypes, which test isolated ideas, MVPs operate in real-world conditions and generate behavioral data that informs decisions.

The concept comes from Lean Startup thinking, popularized by Eric Ries and Steve Blank. Instead of committing to multi-phase delivery plans, teams introduce a narrowly scoped product into the market and measure what happens. Progress is defined by validated learning, not by feature count.

When teams build MVPs

MVPs typically follow the discovery phase, once the problem and a potential solution are defined, but still unproven. At this stage, product managers and designers have explored the problem space, done early research, and outlined a direction. What they haven’t done is put anything real in front of users.

The MVP marks the transition from concept to measurable reality. It allows teams to observe how users interact with a working solution rather than relying on predictions.

In enterprise environments, MVPs are usually deployed through controlled pilots, limited user cohorts, or a specific customer segment. This minimizes exposure while maximizing learning.

Benefits of MVP development

The most immediate benefit is shifting from opinion-based to evidence-based decisions. Instead of debating what users want, product leaders watch what they actually do.

Additional benefits include:

- Reduced risk: early validation prevents large-scale investment in unproven ideas

- Faster learning cycles: teams identify failures sooner and pivot earlier

- Cost efficiency: development focuses only on what is necessary to test the hypothesis

- Stronger alignment: clear data reduces internal disagreement

For enterprises, MVPs also counter a common structural issue: over-engineering. By enforcing strict prioritization, teams avoid building complexity before it’s justified.

MVP standards and quality

MVPs are not intentionally rough products. Experienced engineering teams treat them as focused but production-grade, and there’s a meaningful difference between “minimal” and “broken.”

A well-built MVP typically centers on three to five core features, often just one primary capability that delivers the clearest value. Everything else is deferred.

Core standards every MVP should meet:

- Value-focused: solves a specific problem for a defined audience

- Functional: works consistently in real conditions

- Instrumented: collects actionable user data

- Iteration-ready: can evolve without major rework

What doesn’t belong in an MVP:

- Advanced customization options

- Polished branding, complex animations, or extensive visual design

- Multiple complex user roles

- Enterprise-grade scalability features

In enterprise contexts, the temptation to over-polish before internal stakeholders see the product is real and consistently counterproductive. An over-engineered MVP generates debt instead of speed. The discipline is maintaining high execution standards while aggressively limiting what gets built.

MVP product vs. MVP business

Most teams think about an MVP as a product. Executives usually need to evaluate something broader in scope.

The distinction is worth making explicit:

MVP product: the simplest working version of a solution, built to test whether it functions as intended and whether users find it usable. The focus is on functionality and feature validation. A food delivery app that allows orders only from one restaurant type, testing whether the ordering flow works, is a classic example.

MVP business: the simplest experiment to test whether the entire business model is viable, often involving manual processes instead of software. A founder taking orders by text message and delivering food personally (before building any app) is testing something different: whether people want the service at all, whether they’ll pay for it, and whether the unit economics make sense.

The key differences:

| | MVP product | MVP business |
|---|---|---|
| Focus | Functionality and usability | Feasibility and willingness to pay |
| Question it answers | Does the solution work? | Should we build this at all? |
| When to use | Technology needs validation | Market demand is unproven |
| Typical format | Working software, prototype | Manual process, concierge, landing page |

Both are experiments that rely on real user feedback to decide whether to pivot or continue. The difference is what’s being tested.

A feature can drive heavy usage and still fail to generate revenue. A solution can address a genuine pain point and still require unsustainable acquisition costs. Leaders tend to evaluate both layers simultaneously, asking not just whether users engage but also whether the initiative aligns with broader business goals and can scale within existing structures. That’s why MVPs extend beyond the product itself, with teams running parallel tests on go-to-market strategies, internal processes, and operational constraints.

Enterprise MVPs vs. startup MVPs

The principle, validate before scaling, stays the same across both contexts. The execution differs significantly.

Startup teams optimize ruthlessly for speed. They target early adopters, keep architecture intentionally lean, and treat the build as something to learn from and iterate on. When the hypothesis fails, they pivot without the weight of legacy systems or organizational approval chains. The risk they’re managing is market rejection before they find product-market fit.

Enterprise teams build to extend. Their MVPs need to integrate with existing ERP and CRM systems, meet security and compliance standards from day one, and survive scrutiny from legal, IT, and procurement before a single user touches them. The risk they’re managing is operational disruption — and reputational damage that outlasts any single product decision.

| Dimension | Startups | Enterprises |
|---|---|---|
| Primary goal | Validate core idea, attract investors | Validate operational feasibility, secure buy-in |
| Target audience | Early adopters, external customers | Internal stakeholders, B2B clients |
| Architecture | Monolithic, disposable, fast | API-first, secure, integration-ready |
| Pace | Fast, scrappy | Deliberate, governance-driven |
| Success metrics | Sign-ups, retention, revenue growth | Adoption rates, workflow efficiency, productivity gains |
| Primary risk | Market rejection | Compliance breach, operational disruption |

The architectural difference is particularly consequential. A startup can rebuild from scratch if the first version fails, and that’s often the plan. An enterprise team building on a throwaway foundation creates technical debt that compounds quickly once the product moves toward production. “Build it right” isn’t conservatism; it’s the cheaper long-term choice.

Pre-MVP planning and alignment

Before anyone writes code, alignment does the heavy lifting. Engineers, product managers, and executives need a shared view of what they’re testing and why it matters now. In enterprises, a VP of Product, a CTO, and multiple team leads may all shape direction, which slows decisions and raises the stakes. Weak alignment means shipping something that answers the wrong question. Treat this phase as a working agreement that the team actually uses when trade-offs come up mid-build.

Identify and validate customer pain points

Strong teams don’t start with the best features. They start with friction.

Product managers and designers go into the field — customer calls, sales conversations, support tickets — and look for patterns, not anecdotes. Engineers should stay close to this step. Developers who understand the problem firsthand make better implementation decisions later.

A practical approach: run 10–15 focused interviews, pull recent support and sales logs, and identify repeated breakdowns in workflows. But interviews alone aren’t enough. According to Forbes, teams should enumerate the jobs to be done — the self-contained user problems that are genuinely valuable to solve — rather than brainstorming feature lists and trimming from there. That shift from supply-side to demand-side thinking changes what gets built entirely.

The goal is to confirm the problem is painful enough that someone will change their behavior to solve it. Skip this, and the MVP becomes a technical exercise rather than a business one.

Research the market: build proto-personas, identify key competitors

Once the problem is clear, teams need context: who feels this pain most acutely, and what do those users already have available?

Designers and researchers typically draft proto-personas in a single session, not polished artifacts, but quick snapshots that keep engineers and product teams oriented around a specific role, goal, current workaround, and core frustration. Targeting “enterprise users” is too broad. Focusing on a single role inside a single workflow makes the MVP easier to design and validate.

At the same time, product teams map the competitive landscape, and this is where many companies fall into a trap. They list features instead of understanding positioning. Push deeper: why do customers choose one competitor over another? Where do users drop off or complain? Where does no one have a good answer yet? You’re not trying to outbuild competitors at this stage. You’re finding the narrow angle where the MVP can establish early credibility.

Understand your own constraints

Constraints shape products more than ideas do.

Engineering teams need clarity on what they can realistically deliver: available developers and designers, budgets, timelines, and system limitations. In enterprise environments, that list expands fast. Legacy systems, internal APIs, compliance reviews, and security requirements all come into play before the first feature ships.

Instead of resisting constraints, use them to prioritize ruthlessly. If integrating a legacy system takes six months, it doesn’t go in the MVP. When everyone sees the same boundaries, trade-off conversations get faster and less political.

This applies to organizational constraints as well. Talent gaps, budget approval cycles, and legal review timelines are just as real as any infrastructure limitation. Mapping them early prevents delays from surfacing at the worst possible moment.

Define your initial sales and marketing approach

Many teams treat go-to-market as something that comes after the product. That’s a mistake that costs real time and distorts what gets built.

The MVP needs a distribution path from day one. Who will use this first? How do they access it? What problem statement leads the conversation? In B2B contexts, that might mean piloting with existing clients; for an internal platform team, it might mean starting with a single department. This decision shapes product scope directly. If first users arrive through sales demos, the product needs to support demos. If through self-serve, onboarding becomes critical from the start.

One thing to keep in mind: the MVP launch isn’t a marketing moment; it’s a learning moment. Broad campaigns generate noise. Targeted outreach to a defined user segment, or a waitlist seeded through a direct relationship, generates signal. Save performance marketing budgets for when the product has demonstrated retention and you’re ready to scale.

Align on the initial definition of success, metrics, and decision thresholds

If stakeholders don’t agree on what success looks like before the MVP launches, they’ll disagree on how to interpret the results afterward.

Product leaders should define a small set of metrics tied directly to the problem being solved. Activation, engagement, and retention are usually the right starting points. But metrics alone don’t drive decisions — thresholds do. The team needs to agree in advance on what each outcome means for the path forward. For example:

- If 40% of users complete the core action, expand development

- If engagement stalls below 15%, revisit the solution

- If retention drops after the first use, redesign onboarding

This turns the MVP into a decision tool:

- Engineers and designers know what they’re optimizing for.

- Executives know how to interpret outcomes without relitigating the brief.
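One way to make pre-agreed thresholds hard to relitigate is to write them down as code before launch. The sketch below is illustrative only: the 40% and 15% cut-offs come from the example list above, while the `PilotMetrics` fields, the `decide` function, and the `retention_floor` default are hypothetical names and values a team would replace with its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotMetrics:
    """Observed pilot results, each expressed as a fraction between 0 and 1."""
    core_action_rate: float           # share of users who completed the core action
    engagement_rate: float            # share of users who stayed engaged
    retention_after_first_use: float  # share of users who came back after first use


def decide(metrics: PilotMetrics, retention_floor: float = 0.30) -> list[str]:
    """Map metrics to next steps agreed before launch.

    retention_floor is an assumed value; the article's example does not
    specify a number for the retention rule.
    """
    decisions = []
    if metrics.core_action_rate >= 0.40:
        decisions.append("expand development")
    if metrics.engagement_rate < 0.15:
        decisions.append("revisit the solution")
    if metrics.retention_after_first_use < retention_floor:
        decisions.append("redesign onboarding")
    return decisions or ["no threshold met: review with stakeholders"]


print(decide(PilotMetrics(0.45, 0.22, 0.25)))
# ['expand development', 'redesign onboarding']
```

Because the rules are fixed before any data arrives, the same numbers always lead to the same conversation, whoever reads the dashboard.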

One discipline that consistently pays off: define a single primary success metric tied to the core user problem. Teams that track too many metrics at once tend to cherry-pick the numbers that confirm what they already believed. A single north star metric, agreed upon before launch, keeps interpretation honest and decisions faster.

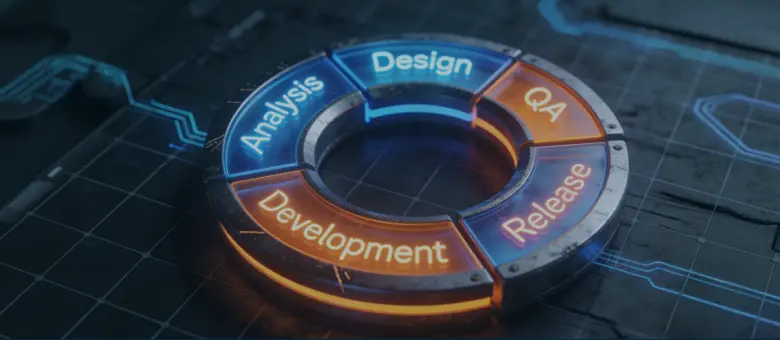

The MVP development process

Product teams that ship MVPs successfully almost always work in agile cycles. Instead of committing to a multi-month delivery plan and hoping the assumptions hold, they build in short sprints, expose the work to real users, and adjust based on what they observe. For enterprises specifically, this software development methodology matters more than it does at startups: the longer a team goes without external feedback, the more organizational momentum builds behind ideas that may not survive contact with actual users. Scrum and Kanban both work well here; the specific framework matters less than the discipline of treating each sprint as a learning cycle, not just a delivery cycle.

Define the problem and business case

Before writing a line of code, connect the problem to a concrete business outcome: revenue, retention, or operational efficiency. If that link is vague going in, the team will fill the gap with assumptions, and those assumptions will shape every decision that follows.

In-depth market research and personas

Go beyond demographics. Map the specific workflow where your target user currently struggles, what workaround they use today, and why that workaround falls short. A proto-persona built around a real friction point is more useful than a polished one built around demographic averages.

Map user journeys

Sketch the steps a user takes from encountering the problem to resolving it, then cut every step the MVP doesn’t strictly require. This exercise almost always reveals that the product needs to do less than the team originally planned, which is a good outcome.

Define and prioritize features

Apply the MoSCoW method — Must-have, Should-have, Could-have, Won’t-have — and be ruthless about what earns “Must.” If removing a feature doesn’t change what the team learns from the experiment, it doesn’t belong in the build.
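As a rough illustration, the MoSCoW filter and the "does removing it change what we learn?" test can be combined in a few lines. The feature names and the `informs_hypothesis` flag below are hypothetical; in practice that flag is a judgment call the team makes together.

```python
from dataclasses import dataclass
from enum import Enum


class Priority(Enum):
    MUST = "Must-have"
    SHOULD = "Should-have"
    COULD = "Could-have"
    WONT = "Won't-have"


@dataclass(frozen=True)
class Feature:
    name: str
    priority: Priority
    informs_hypothesis: bool  # would removing it change what the team learns?


def mvp_scope(backlog: list[Feature]) -> list[str]:
    """Must-haves that also change what the experiment teaches."""
    return [f.name for f in backlog
            if f.priority is Priority.MUST and f.informs_hypothesis]


def demotion_candidates(backlog: list[Feature]) -> list[str]:
    """Must-haves that do not affect the learning goal; challenge these first."""
    return [f.name for f in backlog
            if f.priority is Priority.MUST and not f.informs_hypothesis]


backlog = [
    Feature("submit expense report", Priority.MUST, True),
    Feature("manager approval", Priority.MUST, True),
    Feature("branded email templates", Priority.MUST, False),
    Feature("multi-currency support", Priority.SHOULD, False),
    Feature("offline mode", Priority.WONT, False),
]

print(mvp_scope(backlog))            # ['submit expense report', 'manager approval']
print(demotion_candidates(backlog))  # ['branded email templates']
```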

Choose MVP type and approach

Not every MVP needs to be working software. A concierge MVP, where a human performs the service manually while the user experiences a clean front end, can validate demand in days rather than weeks. Match the format to the question: if you’re testing feasibility, build something. If you’re testing demand, you might not need to build anything at all.

Select tools and tech stack

Optimize for speed within your constraints, not for maximum scalability on day one. That said, enterprises can’t build on a throwaway foundation the way startups sometimes do. Modular architecture and clean API boundaries from the start cost very little extra time upfront and prevent expensive rewrites when the product moves toward production.

Design, prototype, and validate concepts

Run a clickable prototype past real users before development starts. One round of usability testing at this stage routinely surfaces issues that would have taken weeks to uncover in code, and fixing a Figma file takes an hour.

Develop the MVP

Build iteratively in two-week sprints, keep scope locked within each cycle, and resist the pull to add features mid-sprint based on stakeholder enthusiasm. The guiding question throughout is the same: are we building just enough to learn?

Integrate with existing systems

Enterprise MVPs don’t launch in isolation. Map your integration dependencies early and separate the blockers from the nice-to-haves. Where full integration isn’t feasible at the MVP stage, stub or simulate the connection to maintain momentum without creating technical debt that’s hard to unwind later.
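A minimal sketch of the stubbing idea, assuming a hypothetical CRM lookup: the MVP codes against a small interface, and an in-memory stand-in satisfies it until the real integration is ready. `CustomerDirectory`, `StubCustomerDirectory`, and `build_welcome_message` are illustrative names, not part of any real CRM SDK.

```python
from typing import Optional, Protocol


class CustomerDirectory(Protocol):
    """The boundary the MVP depends on (hypothetical interface)."""

    def lookup_email(self, customer_id: str) -> Optional[str]: ...


class StubCustomerDirectory:
    """In-memory stand-in used until the real CRM connection exists."""

    def __init__(self, records: dict[str, str]) -> None:
        self._records = dict(records)

    def lookup_email(self, customer_id: str) -> Optional[str]:
        return self._records.get(customer_id)


def build_welcome_message(directory: CustomerDirectory, customer_id: str) -> str:
    """Business logic only knows the interface, not which system sits behind it."""
    email = directory.lookup_email(customer_id)
    if email is None:
        return "Customer not found"
    return f"Welcome email queued for {email}"


directory = StubCustomerDirectory({"c-1": "pat@example.com"})
print(build_welcome_message(directory, "c-1"))
# Welcome email queued for pat@example.com
```

When the real integration lands, only the class passed in as `directory` changes; the calling code stays as it is, which is what keeps the stub from turning into debt.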

Launch with controlled user groups

Start with a small, defined cohort: internal users, a pilot customer, or a single department. A controlled launch surfaces real issues without reputational exposure, and the feedback from 50 engaged users is more actionable than aggregate data from 5,000 passive ones.
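Controlled access is often implemented as a simple gate in front of the new feature. The sketch below shows one common pattern under stated assumptions: an explicit allowlist for the pilot cohort, plus an optional percentage rollout based on a stable hash of the user ID. The function name and parameters are hypothetical.

```python
import hashlib


def in_pilot(user_id: str, allowlist: set[str], rollout_percent: int = 0) -> bool:
    """Return True if this user should see the MVP.

    Users on the allowlist are always included. Everyone else is placed in a
    stable bucket from 0 to 99 derived from their ID, so the same user gets
    the same answer on every request.
    """
    if user_id in allowlist:
        return True
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < rollout_percent


pilot_cohort = {"alice@corp.example", "bob@corp.example"}
print(in_pilot("alice@corp.example", pilot_cohort))  # True
print(in_pilot("carol@corp.example", pilot_cohort))  # False (rollout is 0%)
```

Starting with `rollout_percent=0` keeps exposure limited to the named cohort; raising it later widens the audience without a redeploy of the gating logic.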

Measure performance and feedback

Track a small number of metrics tied directly to your success thresholds, set before launch. Qualitative feedback from user interviews and quantitative data from behavioral analytics answer different questions; you need both to understand not just what users are doing, but why.
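On the quantitative side, the first pass can be very small. The following sketch assumes a hypothetical event log of `(user_id, event_name, day)` tuples and two simplified definitions: a user is "activated" if they performed an event named `core_action`, and "retained" if they were active on more than one distinct day. Real definitions should match the thresholds the team agreed on.

```python
from collections import defaultdict


def summarize(events: list[tuple[str, str, int]]) -> dict[str, float]:
    """Compute activation and retention rates from raw events."""
    days_active: dict[str, set[int]] = defaultdict(set)
    activated: set[str] = set()

    for user_id, event_name, day in events:
        days_active[user_id].add(day)
        if event_name == "core_action":
            activated.add(user_id)

    total_users = len(days_active)
    if total_users == 0:
        return {"activation": 0.0, "retention": 0.0}

    retained = sum(1 for days in days_active.values() if len(days) > 1)
    return {
        "activation": len(activated) / total_users,
        "retention": retained / total_users,
    }


events = [
    ("u1", "login", 0), ("u1", "core_action", 0), ("u1", "login", 3),
    ("u2", "login", 0),
    ("u3", "core_action", 1),
    ("u4", "login", 2),
]
print(summarize(events))  # {'activation': 0.5, 'retention': 0.25}
```

Numbers like these say what happened; the interviews are still needed to explain why three of the four users never came back.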

Iterate and improve

The MVP is a starting point for decision-making, not a finished product. Based on what the data shows, the team either perseveres (the hypothesis held, now build on it) or pivots (something fundamental needs to change). The one unacceptable outcome is continuing to build in the same direction while ignoring what users are telling you.

MVP frameworks

Build-Measure-Learn cycle. This is the engine of Lean Startup methodology. Build the simplest possible version that addresses a core hypothesis. Release it to real users and collect data focused on actionable metrics (e.g., retention, task completion). Then analyze and decide: pivot or persevere.

Prioritization matrices. Three common approaches help teams filter features before a build:

- Value vs. Effort Matrix — features mapped on a 2×2 grid by value delivered and effort required. High-value, low-effort features go first.

- MoSCoW method — features are generally categorized by their necessity and impact. Effective for managing tight budgets and stakeholder expectations.

- Impact, Effort, and Risk Matrix — evaluates features on potential user impact, development effort, and risk level.
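The Value vs. Effort Matrix is simple enough to sketch directly. The example below assumes each feature is scored from 1 to 10 on both axes and uses a midpoint of 5 to split the grid; the scoring scale, the feature names, and the quadrant labels other than "do first" are illustrative assumptions rather than a standard.

```python
def quadrant(value: int, effort: int, midpoint: int = 5) -> str:
    """Place a feature on a 2x2 grid from its value and effort scores."""
    high_value = value > midpoint
    high_effort = effort > midpoint
    if high_value and not high_effort:
        return "do first"
    if high_value and high_effort:
        return "plan carefully"
    if not high_value and not high_effort:
        return "fill in later"
    return "defer"


# Hypothetical scores as (value, effort).
features = {
    "core ordering flow": (9, 4),
    "single sign-on": (8, 9),
    "dark mode": (3, 2),
    "custom dashboards": (4, 8),
}

for name, (value, effort) in features.items():
    print(f"{name}: {quadrant(value, effort)}")
# core ordering flow: do first
# single sign-on: plan carefully
# dark mode: fill in later
# custom dashboards: defer
```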

Collaborative tools. Modern teams rely on collaborative ecosystems:

- Design tools for prototyping and testing

- Project management tools for sprint execution

- AI-assisted development tools for accelerating delivery

Types of MVPs

Not all MVPs look like products. The right format depends on what a team needs to learn and how much it can afford to build to learn it.

Low-fidelity MVPs — wireframes, paper sketches, landing pages — test user interest before a single line of code gets written. They’re fast and cheap, but the signal is limited: you learn whether people are curious, not whether they’ll actually use something.

High-fidelity MVPs are functional versions that closely resemble the final product. They require more resources but generate richer behavioral data — how users actually navigate, where they drop off, what they ignore.

Landing page MVP. A single-page site that presents the value proposition with a clear call-to-action — sign up, pre-order, learn more. It’s fast, low-cost, and surprisingly informative.

Pre-order MVP. Customers pay or commit before the product ships. This goes further than measuring interest — it validates willingness to actually spend money. For hardware companies or complex software products, pre-orders can simultaneously prove demand and fund production.

Video MVP. A short video communicates the core idea and features, often as a cost-effective alternative to building a functional prototype. Examples include explainer videos, animated demos, and walkthroughs of a prototype, even a non-functional one.

Concierge MVP. The service runs manually behind the scenes for a small group of users. The goal is to learn exactly what steps are required to solve the problem before automating any of them.

Wizard of Oz MVP. Similar to the concierge approach, except users believe they’re interacting with an automated system. The front end looks functional; humans handle everything behind it. This lets teams simulate a complete product experience while keeping infrastructure costs near zero.

Single-feature MVP. It concentrates on solving one problem exceptionally well rather than offering a full-featured yet mediocre product. This allows teams to test desirability, viability, and feasibility with real customers in 2–3 weeks.

Successful MVP examples

The most successful products in tech history started as something much smaller than what they became. In most cases, the founders had no idea how big things would get — they were just trying to answer one question cheaply and quickly. What follows are real examples of that process, verified against primary and authoritative sources.

Airbnb. In 2007, Brian Chesky and Joe Gebbia couldn’t pay their rent on their San Francisco apartment and noticed that conference attendees had no affordable place to stay during a sold-out design event. They put air mattresses on their living room floor, built a basic website called “Air Bed & Breakfast,” and rented to three guests at $80 per night. The site had no date selection, no multiple listings, no reviews — just one apartment, one conference, one question: will strangers pay to sleep in someone’s home? That answer was yes, and everything else was built on top of it.

Spotify. Daniel Ek and Martin Lorentzon founded Spotify in Stockholm in 2006, spending two years in closed beta before a public launch in October 2008. The entire engineering focus of those two years was on a single technical problem: playback latency. As Ek later said, “We spent an insane amount of time focusing on latency when no one cared because we were hell-bent on making it feel like you had all the world’s music on your hard drive.” The MVP was a desktop-only app with a limited, licensed music library in Sweden. No mobile app, no social features, no podcast layer — just the core proof that streaming could feel faster than piracy.

Dropbox. In 2007, Drew Houston had a working prototype that ran only on Windows, was far from stable, and could support only a handful of users. Rather than keep building, he recorded a three-minute demo video showing how seamless file syncing across devices could work, and posted it to Hacker News as part of his Y Combinator application. The waiting list for the private beta jumped from 5,000 to 75,000 overnight. The video proved that the demand existed before the product did — and that the right MVP sometimes requires no code at all.

Amazon. Jeff Bezos started Amazon from his garage in Bellevue, Washington, in 1994, launching publicly in July 1995 as an online bookstore with 925 titles. He chose books deliberately: they were standardized, easy to ship, and existed in such volume that no physical store could compete on selection. The first version of the site had no marketplace, no third-party sellers, no recommendation engine, and no AWS. Bezos himself has described it as the “Kitty Hawk stage” of e-commerce — proof that people would buy something online before expanding into everything else.

Uber. Travis Kalanick and Garrett Camp launched UberCab in San Francisco in 2010 as a black-car service available via SMS or a basic iPhone app. The fleet was small, pricing was fixed, and access was invite-only — you had to email one of the founders to get in. There was no dynamic pricing, no driver ratings, no UberX, no Uber Eats. The one question being tested was whether someone would request and pay for a ride through an app rather than hailing a cab on the street. Consistent early usage answered it, and the platform was built out from there.

Figma. The product was launched in 2016 after four years of development centered almost entirely on one capability: multiple designers editing the same file simultaneously in a browser. Early versions were missing features that designers expected to be standard, but the collaborative workflow was real, and it worked. That single differentiator solved a genuine pain point in design team coordination — and it was enough to drive adoption despite the gaps. The rest of the product was built incrementally once that core value proposition was validated.

Zapier. When Zapier launched in 2011, the founders manually executed app integrations for early users, running workflows by hand behind the scenes to simulate what the automation engine would eventually do. The point was not to build infrastructure; it was to confirm that connecting tools across different services was a problem people would pay to solve. Once demand was proven through that concierge approach, they built the automation layer on top of validated need rather than assumption.

Buffer. In 2010, founder Joel Gascoigne built a two-page website before writing any product code. The first page described the concept of scheduled social media posting; the second showed a pricing page. Users who clicked to upgrade saw a message saying the product wasn’t ready yet. The conversion rate on that flow was enough to prove willingness to pay. Gascoigne built the actual product only after the landing page validated that the problem was worth solving and that people would open their wallets for the solution.

Instagram. Its predecessor, Burbn, launched in 2010 as a location-based check-in app with photo sharing, plans, and social features layered on top. Usage data told a clear story: users ignored almost everything except the photos. Founders Kevin Systrom and Mike Krieger stripped the app down, rebuilt it around photo sharing and filters, and relaunched it as Instagram. Within 24 hours of launch, 25,000 users had signed up. The pivot wasn’t a failure — it was the MVP doing exactly what it’s supposed to: revealing what users actually want.

Netflix. Before building anything, Reed Hastings and Marc Randolph tested one assumption: could a DVD survive being mailed? They put a disc in an envelope and mailed it to Hastings’s house. It arrived intact. That was enough to launch a website in April 1998 with 925 titles available for individual rental by mail. There was no subscription model yet, no streaming, no original content. The subscription model came in 1999; streaming arrived in 2007 — nearly a decade after the first envelope was dropped in a mailbox.

MVP cost and optimization

Budget is where many promising MVPs stall. Teams either underestimate the cost of a functional product and run out of runway, or overspend by building too much before validating anything. Getting the numbers right starts with understanding what actually drives the price.

MVP cost ranges

In 2026, MVP development costs typically range from $15,000 to $120,000, with most market-ready products falling in the $40,000–$80,000 range. The spread is wide because “MVP” can mean very different things depending on the product and the team building it.

A rough breakdown by complexity:

| MVP type | Cost range | What it typically covers |
|---|---|---|
| Simple | $5,000–$15,000 | Basic functionality, minimal design, single platform |
| Mid-range | $15,000–$50,000 | Core features, standard integrations, polished UI |
| Complex | $40,000–$120,000+ | Custom features, high security, multiple platforms |

At the phase level, the largest budget items are usually backend infrastructure ($20,000–$45,000), frontend development ($15,000–$35,000), and UI/UX design ($8,000–$15,000). These numbers shift significantly based on where the development team is located and how much of the work involves custom versus off-the-shelf solutions.

Key cost factors

Feature scope. Development time is the primary cost driver, and feature scope determines development time. Every addition, even a seemingly minor one, carries design, engineering, testing, and integration costs. Teams that scope aggressively at the start spend less and learn faster.

Team location and rates. A senior developer in North America or Western Europe typically bills at $100–$150 per hour. Comparable talent in Eastern Europe runs $40–$70, and Latin America sits in a similar range. For a 2,000-hour project, that gap can mean a difference of roughly $60,000–$220,000 in labor costs alone. Many enterprise teams and funded startups use a hybrid model, keeping product and design onshore while outsourcing engineering to cost-effective regions.
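The arithmetic behind that comparison is worth checking by hand. This short sketch uses only the hourly rates and the 2,000-hour figure quoted above, and it treats Latin America as sharing the Eastern Europe range, which is a simplifying assumption.

```python
HOURS = 2_000

# Hourly rate ranges in USD, taken from the paragraph above.
ONSHORE_RATES = (100, 150)   # North America / Western Europe
OFFSHORE_RATES = (40, 70)    # Eastern Europe (Latin America assumed similar)


def labor_cost(hours: int, rates: tuple[int, int]) -> tuple[int, int]:
    """Return the (low, high) labor cost for a project of the given size."""
    low_rate, high_rate = rates
    return hours * low_rate, hours * high_rate


onshore = labor_cost(HOURS, ONSHORE_RATES)    # (200000, 300000)
offshore = labor_cost(HOURS, OFFSHORE_RATES)  # (80000, 140000)

# Narrowest gap: cheapest onshore team vs. priciest offshore team.
smallest_gap = onshore[0] - offshore[1]       # 60000
# Widest gap: priciest onshore team vs. cheapest offshore team.
largest_gap = onshore[1] - offshore[0]        # 220000

print(onshore, offshore, smallest_gap, largest_gap)
```

So the onshore build lands between $200,000 and $300,000, the offshore one between $80,000 and $140,000, and the difference runs from about $60,000 to about $220,000 depending on which ends of the ranges apply.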

Platform choices. Building separate native apps for iOS and Android roughly doubles mobile development effort. Cross-platform frameworks like React Native let teams share most of their codebase across platforms, meaningfully reducing costs without sacrificing much in user experience for most MVP use cases.

Backend complexity. Real-time databases, AI features, and multi-system integrations are expensive to build correctly. If the MVP doesn’t require real-time data or complex automation to validate its core hypothesis, those elements should wait.

Additional cost considerations

Post-launch costs are the budget line most teams underestimate. Plan for ongoing maintenance at roughly 20% of the initial development cost annually, covering updates, bug fixes, compatibility patches, and server infrastructure. For a $60,000 MVP, that’s around $12,000 per year before any new feature development.
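To see how maintenance accumulates, the 20% rule of thumb can be projected over a few years. This is a simplified sketch: it assumes a flat maintenance rate applied to the original build cost and ignores new feature work, inflation, and hosting growth. The function names are illustrative.

```python
def annual_maintenance(initial_build: int, maintenance_percent: int = 20) -> float:
    """Yearly maintenance as a percentage of the initial build cost."""
    return initial_build * maintenance_percent / 100


def cumulative_cost(initial_build: int, years: int, maintenance_percent: int = 20) -> float:
    """Initial build plus flat yearly maintenance over the given number of years."""
    return initial_build + annual_maintenance(initial_build, maintenance_percent) * years


print(annual_maintenance(60_000))   # 12000.0
print(cumulative_cost(60_000, 3))   # 96000.0
```

Under these assumptions, a $60,000 MVP that runs for three years costs $96,000 before any new features are added, which is the number to bring to budget conversations.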

Quality assurance deserves a meaningful share of the budget from the start. Cutting QA to save money typically costs more later when production issues erode user trust, especially in enterprise contexts where a single bad experience can end a pilot program.

Project management is another area where early investment pays off. Agile delivery with clearly defined sprint goals reduces rework and keeps engineers focused on what matters. The overhead is real, but so is the cost of building the wrong thing.

Cost optimization strategies

Scope to the hypothesis, not the vision. The MVP should answer one core question. Every feature that doesn’t contribute to that answer lives in the backlog for later.

Build iteratively. Short development cycles (typically two weeks) let teams catch misalignments early, before they compound. Engineers course-correct on paper rather than in production code.

Outsource selectively. A cost-efficient engineering team in Eastern Europe or Latin America can deliver strong results, but the engagement needs structure: clear specs, defined milestones, and a product manager who can bridge the communication gap. Outsourcing without those guardrails often costs more in rework than it saves in rates.

Leverage open source and existing APIs. Payment processing, authentication, maps, notifications — most of these problems are already solved. Building them from scratch is rarely justified at the MVP stage. Using established libraries and third-party services keeps the team focused on what’s actually differentiated.

Consider no-code for the simplest validations. For landing page MVPs, early demand tests, or internal workflow tools, platforms like Webflow, Bubble, or Glide can deliver a functional product in days rather than weeks. The limitation is scale — but at the validation stage, scale isn’t the goal.

The teams that manage MVP budgets well tend to share one habit: they treat every engineering dollar as a question. What assumption does this build answer? If the answer isn’t clear, the feature waits.

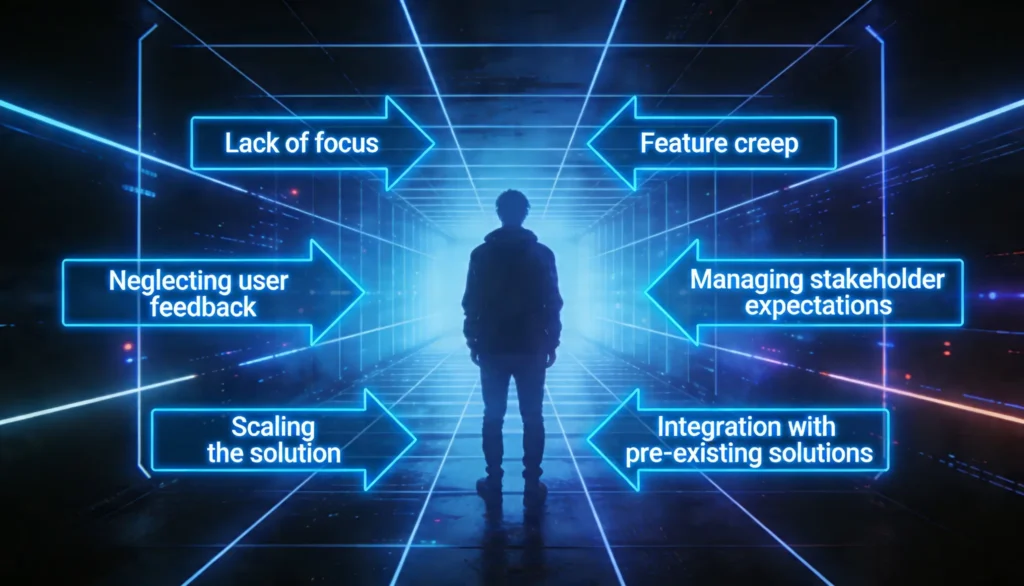

Common MVP challenges

Building an MVP sounds straightforward in theory: ship the smallest thing that tests your core assumption, learn fast, and iterate. In practice, teams consistently run into the same walls. The challenges below are especially acute for enterprise teams, where organizational complexity adds friction at every stage. According to McKinsey research, only 24% of incumbents’ new ventures become viable companies, a sobering reminder that the build process itself is where most value is won or lost.

Lack of focus

The most common failure mode isn’t technical; it’s definitional. Teams that can’t articulate exactly what assumption the MVP is testing end up building a lightweight version of a full product rather than a targeted experiment. The output looks like a product but functions like a guess.

Before a line of code is written, the team needs a single, specific answer: what decision will this MVP help us make? Incumbents are especially prone to skipping this step. Their culture often biases them toward planning comprehensiveness over hypothesis clarity, mapping out full product roadmaps before validating whether customers even want the product.

Feature creep

Scope expands for predictable reasons: stakeholders advocate for their priorities, designers spot opportunities, and engineers see adjacent problems worth solving. Each addition feels justified in isolation. Collectively, they delay launch, inflate cost, and dilute the value proposition.

The discipline isn’t saying no permanently; it’s saying not yet, and enforcing that boundary until the core hypothesis is validated. Smart teams treat their MVP backlog as a research queue: every unbuilt feature is a future test, not a casualty.

Perfectionism

Shipping something unpolished feels risky. In practice, waiting until the product feels “ready” is the greater risk, because it delays the feedback that would tell the team whether they’re building the right thing at all. An MVP that launches six months late has already lost its most valuable asset: time to learn.

The antidote is reframing what “done” means. An MVP isn’t an unfinished product; it’s a complete experiment. The goal isn’t polish; it’s signal. Teams that internalize this ship faster and learn more.

Neglecting user feedback

Building a feedback loop is straightforward. Actually using it is harder. Teams collect data, then continue building what they planned to build anyway. Roughly 42% of startups fail because they misread market needs. In many cases the problem isn’t a lack of data; it’s a failure to act on it.

Feedback only has value when it’s connected to product decisions with clear timelines. The best teams treat customer input as a continuous input to the roadmap, not a post-launch report card.

Managing stakeholder expectations

This challenge hits enterprises far harder than startups. A founder can make a call in a day. A VP of Product at a large company may need sign-off from finance, legal, IT security, and executive leadership before moving forward. Each approval layer adds latency, and each stakeholder brings a different definition of success, a different risk tolerance, and often a different understanding of what an MVP actually is.

The deeper issue is cultural. Enterprise stakeholders are accustomed to finished products. Presenting a deliberately minimal build as a strategic choice requires active framing from the start. Product leaders who invest in stakeholder education early, setting explicit expectations about what the MVP will and won’t do, spend far less time defending the approach mid-build. The goal is to turn decision-makers from gatekeepers into aligned sponsors.

Scaling the solution

Building for scale on day one is expensive and slow. Not building for it at all creates a different kind of expensive problem later, when the product is gaining traction and can least afford a rewrite.

For enterprise teams, this tension is sharper than at startups. Every system built inside a large organization will eventually need to scale, integrate with other platforms, and meet compliance requirements that weren’t top of mind at the MVP stage. The practical answer is architectural discipline without over-engineering: modular design, cloud-native infrastructure, and clean API boundaries from the start. These choices don’t meaningfully slow down MVP delivery, but they create a foundation that doesn’t collapse under production load or under the weight of the organization’s own governance processes.

Integration with pre-existing solutions

Startups build on a blank slate; enterprises don’t. Most large organizations run a sprawling mix of legacy systems, modern SaaS tools, custom-built platforms, and an increasingly wide range of low-code and no-code solutions adopted by different teams at different times. Any new product has to coexist with all of them.

This creates integration complexity that’s easy to underestimate at the MVP stage. A new customer-facing tool may need to connect to a CRM built a decade ago, a billing system with limited API access, and a data warehouse owned by a team with its own release schedule. Low-code tools add another wrinkle: they often sit outside standard IT governance, meaning the data flows and dependencies they create aren’t always documented or even visible.

The practical implication is that enterprise MVP teams need to map their integration landscape early, not to solve every connection upfront, but to understand which dependencies are blockers versus nice-to-haves. Building with clean interfaces and avoiding tight coupling buys options later, when the full integration picture becomes clearer.
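One way to keep coupling loose is to have the MVP depend on a small interface rather than on any specific system. The sketch below is illustrative only: `CustomerDirectory`, `InMemoryDirectory`, and `LegacyCrmAdapter` are hypothetical names, the legacy CRM is imitated by a plain dictionary, and the `EMAIL_ADDR` field name is an assumption.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class Customer:
    customer_id: str
    email: str


class CustomerDirectory(Protocol):
    """The only customer-lookup surface the MVP code depends on."""

    def find(self, customer_id: str) -> Optional[Customer]: ...


class InMemoryDirectory:
    """Stand-in used while the real integration is still unresolved."""

    def __init__(self, customers: list[Customer]) -> None:
        self._by_id = {c.customer_id: c for c in customers}

    def find(self, customer_id: str) -> Optional[Customer]:
        return self._by_id.get(customer_id)


class LegacyCrmAdapter:
    """Hypothetical adapter that translates legacy CRM rows into Customer objects."""

    def __init__(self, crm_rows: dict[str, dict[str, str]]) -> None:
        # crm_rows imitates the legacy system's own field names.
        self._rows = crm_rows

    def find(self, customer_id: str) -> Optional[Customer]:
        row = self._rows.get(customer_id)
        if row is None:
            return None
        return Customer(customer_id=customer_id, email=row["EMAIL_ADDR"].lower())


def welcome_message(directory: CustomerDirectory, customer_id: str) -> str:
    """MVP feature code: unaware of which backend sits behind the interface."""
    customer = directory.find(customer_id)
    if customer is None:
        return "Unknown customer"
    return f"Welcome, {customer.email}"


stub = InMemoryDirectory([Customer("c-1", "ana@example.com")])
legacy = LegacyCrmAdapter({"c-1": {"EMAIL_ADDR": "ANA@EXAMPLE.COM"}})
print(welcome_message(stub, "c-1"))    # Welcome, ana@example.com
print(welcome_message(legacy, "c-1"))  # Welcome, ana@example.com
```

Because `welcome_message` only sees the interface, the team can launch against the stub and swap in the real adapter later without touching feature code.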

Post-MVP evolution

A successful MVP answers a question. What comes next is building a business around that answer.

Once the core hypothesis holds — users engage, the problem is real, the behavior changes — the product needs to grow beyond its experimental state. That means moving from “does this work?” to “can this scale, generate revenue, and retain users?” The path forward typically runs through two intermediate stages before reaching full product maturity.

| | MVP | MMP | MLP |
|---|---|---|---|
| Purpose | Validate a core hypothesis | Deliver reliable value to paying users | Create an emotional connection and preference |
| Quality bar | Functional enough to learn from | Stable, consistent, supportable | Intuitive, friction-free, rewarding |
| Primary metric | Evidence that justifies the next step | Revenue, repeat usage, low support friction | Retention, referrals, and user advocacy |
| Who it’s for | Early testers and pilot users | First real customers | Users already showing traction |
| Key risk | Overbuilding, wrong hypothesis | Launching before it’s truly reliable | Mistaking visual polish for experience quality |
| Comes after | Discovery and alignment | MVP validation | MMP traction |

Minimum marketable product (MMP)

The MMP is the first version that real customers can rely on and pay for. Engineers building one are no longer running an experiment — they’re delivering a service, which significantly changes the quality bar. Onboarding must work without hand-holding, performance must hold under real-world conditions, and pricing and support paths must exist.

Minimum lovable product (MLP)

An MLP solves a problem and makes users feel something about it. What makes a product lovable isn’t visual polish — it’s friction elimination: the workflow that takes three steps instead of eight, the moment the product anticipates what a user needs next. Teams that treat the MLP as a cosmetic upgrade miss the point. Emotional connection comes from how well the product supports what users are trying to accomplish.

Pivot vs. persevere

After the MVP, leadership has to make the call the whole exercise was designed to support. Persevering means holding to the core hypothesis: users engaged as predicted, metrics hit the thresholds set before launch, and the path forward is clear. Pivoting means the data revealed something the team’s assumptions missed: the wrong audience, a flawed value proposition, or a monetization model that doesn’t align with user behavior. A pivot isn’t a failure; it’s the MVP doing its job. The critical discipline is making the call based on data, not on how much the team has already invested — sunk cost thinking is what turns a useful pivot into an expensive death march.
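The idea of judging results against thresholds set before launch can be sketched in a few lines. This is a minimal illustration, not a prescribed method: the metric names, threshold values, and pilot numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str
    minimum: float  # value agreed on before launch


def review(thresholds: list[Threshold], observed: dict[str, float]) -> tuple[str, list[str]]:
    """Return a recommendation plus the metrics that missed their threshold.

    A metric with no observed value counts as a miss, so gaps in
    instrumentation cannot pass silently.
    """
    missed = [
        t.metric
        for t in thresholds
        if observed.get(t.metric, float("-inf")) < t.minimum
    ]
    recommendation = "persevere" if not missed else "revisit hypothesis"
    return recommendation, missed


# Hypothetical thresholds and pilot results, for illustration only.
thresholds = [
    Threshold("week_4_retention", 0.30),
    Threshold("activation_rate", 0.50),
    Threshold("pilot_to_paid_conversion", 0.10),
]
observed = {"week_4_retention": 0.34, "activation_rate": 0.41}

print(review(thresholds, observed))
# ('revisit hypothesis', ['activation_rate', 'pilot_to_paid_conversion'])
```

Spend to date is deliberately not an input to `review`, which keeps sunk cost out of the recommendation. The output is a prompt for a leadership discussion, not a substitute for one.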

Enterprise procurement and vendor selection

When to outsource MVP development

Outsourcing makes sense in a few specific situations: the internal team lacks the specialized skills the MVP requires, time-to-market pressure is real, or hiring full-time engineers for a short-term validation project doesn’t make financial sense. For founders focused on fundraising and early customer development, handing off the build to an experienced external team lets them stay focused on what actually moves the needle.

The calculus changes when the technology itself is the competitive advantage. If the MVP is built around a novel algorithm or proprietary approach, keeping development in-house protects the IP and maintains tighter control over the core asset. The same applies when a capable internal tech team already exists. Outside those two scenarios, outsourcing is often the faster, leaner path.

A few practices separate successful outsourcing engagements from costly ones:

- Choose a partner that offers strategic input, not just execution.

- Keep at least one internal technical person in the loop to guide decisions and maintain quality standards.

- Define scope clearly before development starts.

Evaluating agencies vs. in-house

The core trade-off is between speed and flexibility on the one hand, and control and continuity on the other. Agencies bring ready-to-deploy teams, proven frameworks, and the ability to scale effort up or down without the disruption of hiring or layoffs. In-house teams build deeper product context over time and are generally better suited for products that will require sustained, long-term development after the MVP stage.

For most early-stage companies validating a single hypothesis on a defined timeline, an agency partnership is the more practical choice. The key is finding the right one, which means evaluating not just technical capability but also process maturity, communication standards, and cultural fit.

Check our guide for a deeper look at how to choose the right software development partner for your business in 2026 and beyond.

MVP development for AI products

Building an MVP for an AI product follows the same validation logic as any other — test the core hypothesis before scaling investment. But the technical and ethical complexity is meaningfully higher. Shipping a broken feature in a standard product is a bug. Shipping a biased or unreliable AI model erodes trust in ways that are much harder to repair.

Data readiness

For AI products, data is the product. Teams need to start by collecting data that directly maps to the problem they are solving, then clean it rigorously before any training begins. If this step is skipped, structural issues compound quickly and invalidate everything built on top of them.

Two practices that often get deferred to later stages shouldn’t be: data governance and privacy. A data contract — a formal agreement on how data is structured and validated — ensures consistency as the system scales. Anonymization and encrypted storage aren’t features to add post-launch; in regulated industries, they’re preconditions for launch.
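A data contract can start as something very small: a list of expected fields, their types, and a validity check for each. The sketch below assumes a hypothetical support-ticket dataset; `Column`, `TICKET_CONTRACT`, and every field name are illustrative, and a production setup would more likely rely on a dedicated schema-validation tool.

```python
import hashlib
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Column:
    name: str
    type_: type
    check: Callable[[Any], bool]


# Hypothetical contract for a support-ticket dataset.
TICKET_CONTRACT = [
    Column("ticket_id", str, lambda v: len(v) > 0),
    # Expect a SHA-256 hex digest (64 characters), never a raw customer ID.
    Column("customer_hash", str, lambda v: len(v) == 64),
    Column("resolution_minutes", float, lambda v: v >= 0),
]


def violations(record: dict[str, Any], contract: list[Column]) -> list[str]:
    """List every way a record breaks the contract (empty list means valid)."""
    errors = []
    for column in contract:
        if column.name not in record:
            errors.append(f"{column.name}: missing")
            continue
        value = record[column.name]
        if not isinstance(value, column.type_):
            errors.append(f"{column.name}: expected {column.type_.__name__}")
        elif not column.check(value):
            errors.append(f"{column.name}: failed check")
    return errors


good = {
    "ticket_id": "T-1",
    "customer_hash": hashlib.sha256(b"customer-42").hexdigest(),
    "resolution_minutes": 12.5,
}
bad = {"ticket_id": "T-2", "customer_hash": "customer-42", "resolution_minutes": "12"}

print(violations(good, TICKET_CONTRACT))  # []
print(violations(bad, TICKET_CONTRACT))
# ['customer_hash: failed check', 'resolution_minutes: expected float']
```

Running a check like this at ingestion means a record carrying a raw identifier or a mistyped value is rejected before it reaches training data.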

Model validation

The goal isn’t a perfect model — it’s proof that the AI delivers results worth building on. Accuracy alone rarely tells that story. Depending on the use case, precision, recall, or user-centric measures such as task completion time are more reliable indicators of actual performance.

The most underused validation step is benchmarking against a simpler baseline — a rule-based system or existing manual process. If the AI doesn’t meaningfully outperform the alternative, the MVP hasn’t made its case. Once real users interact with the product, their behavior becomes the most valuable training signal, so feedback loops should be built in from day one.
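The baseline comparison described above might look like the following sketch. The labels and predictions are made-up toy data, and the `margin` parameter is an assumed way of expressing "meaningfully outperform"; the right margin depends on the use case.

```python
def precision_recall(y_true: list[int], y_pred: list[int]) -> tuple[float, float]:
    """Compute precision and recall for binary labels (1 = positive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def beats_baseline(
    model: tuple[float, float],
    baseline: tuple[float, float],
    margin: float = 0.05,
) -> bool:
    """True only if the model leads on both precision and recall by the margin."""
    return all(m >= b + margin for m, b in zip(model, baseline))


# Toy data for illustration: ground truth, a rule-based baseline, and the model.
y_true        = [1, 0, 1, 1, 0, 0, 1, 0]
baseline_pred = [1, 1, 0, 1, 0, 1, 0, 0]
model_pred    = [1, 0, 1, 1, 0, 1, 1, 0]

baseline_scores = precision_recall(y_true, baseline_pred)
model_scores = precision_recall(y_true, model_pred)

print(baseline_scores)  # (0.5, 0.5)
print(model_scores)     # (0.8, 1.0)
print(beats_baseline(model_scores, baseline_scores))  # True
```

Requiring a lead on both metrics is one possible policy. A team whose use case tolerates false positives but not misses might compare recall alone.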

Ethical and compliance considerations

In 2026, the EU AI Act, GDPR, HIPAA, and a growing set of sector-specific frameworks mean compliance shapes architecture decisions from the start, not after. Bias audits on training data, explainability requirements, and human-in-the-loop mechanisms for high-stakes decisions are no longer optional considerations — procurement teams and legal departments in enterprise contexts actively screen for them.

Black-box models face the highest friction. Where possible, interpretability should be a design requirement, not a documentation afterthought.
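A human-in-the-loop mechanism can be as simple as a routing rule in front of the model’s output. The sketch below is one assumed policy, not a compliance recipe: the `Prediction` fields, the `high_stakes` flag, and the 0.9 confidence cutoff are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    case_id: str
    label: str
    confidence: float  # model's own score in [0, 1]
    high_stakes: bool  # flagged upstream by business rules


def route(prediction: Prediction, min_confidence: float = 0.9) -> str:
    """Return 'auto' or 'human_review' for a single prediction.

    Assumed policy: every high-stakes case goes to a person, as does
    any case where the model is not confident enough.
    """
    if prediction.high_stakes or prediction.confidence < min_confidence:
        return "human_review"
    return "auto"


print(route(Prediction("a", "approve", 0.97, high_stakes=False)))  # auto
print(route(Prediction("b", "approve", 0.97, high_stakes=True)))   # human_review
print(route(Prediction("c", "deny", 0.62, high_stakes=False)))     # human_review
```

Logging each routing outcome alongside the reviewer’s final decision also yields an audit trail and a source of labeled feedback.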

For teams building AI products and looking to move faster without assembling the entire stack from scratch, AgileEngine’s AI Studio covers the tooling, expertise, and process infrastructure that AI-focused MVPs typically require.

Conclusion

Most enterprise product initiatives don’t fail because the technology was wrong. They fail because the organization committed too early, too fully, to an idea that hadn’t yet earned that commitment.

What makes MVP development hard is the discipline to stay within the scope you defined, the courage to ship before the product feels ready, and the organizational alignment to treat a minimal build as a strategic choice rather than a shortcut. Those are leadership problems as much as product ones.

The companies that do this well succeed because they’re honest about what they don’t yet know and methodical about finding out. That approach scales to any organization willing to apply it.

FAQ

What is the difference between a proof of concept and an MVP?

A proof of concept answers a technical question: can this be built? An MVP answers a business question: will people use it? POCs stay internal: engineers use them to validate feasibility. MVPs go in front of real users to validate demand and behavior. You might run a POC to confirm a particular AI model performs well enough on your data before building a product around it.

How long does it take to build an MVP?

Most MVPs take 8 to 16 weeks from the time scoped requirements are defined to launch. Simpler builds can ship in four to six weeks; products involving complex backend infrastructure or regulated industries typically run three to five months. The variable that derails timelines most often isn’t technical complexity; it’s unclear scope going into development.

How should you market an MVP?

The goal at the MVP stage is signal quality. Broad campaigns generate noise; targeted ones generate learning. Direct outreach to a defined user segment, waitlists, and existing communities tend to produce the best feedback. In B2B, sales-led pilots with a handful of design partners outperform any paid acquisition channel. Save performance marketing budgets for when the product has demonstrated retention and you’re ready to scale.

Can MVP development be outsourced?

Yes, and for many companies it’s the right call, especially when speed matters or hiring full-time engineers for a short-term project doesn’t make financial sense. The main risk is misalignment, not quality. Outsourced teams execute well when the scope is clear and a technical stakeholder on the client side maintains oversight.
