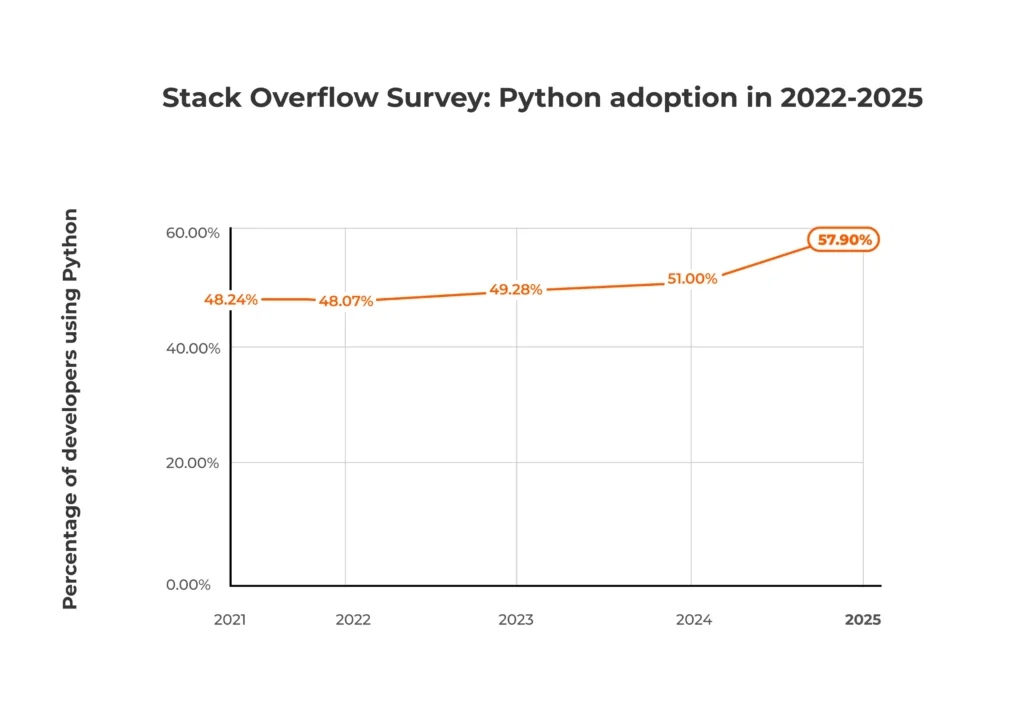

Have you noticed how much Python has grown in popularity since GenAI became mainstream? According to the Stack Overflow Developer Survey, the language gained nearly 10 percentage points between 2021 and 2025. That surge reflects how many developers and data teams now treat Python as the default choice for AI development and data science.

Python popularity chart covering the period from 2021 to 2025 and showing growth from 48.2% to 57.9%

But Python isn’t the only language behind modern AI initiatives — and in some cases, it isn’t even the best option. Engineering teams often choose languages based on factors such as library ecosystems, performance requirements, talent availability, and the ease with which models integrate into production systems. Industry context matters too: a robotics startup, a pharmaceutical research team, and a large enterprise building recommendation engines may all make very different technology choices.

In this guide, you’ll learn which programming languages power modern AI systems, where each one fits in the development stack, and how companies and industries actually use them. We’ll also look at the platforms and tools that help teams turn AI experiments into real products.

AI programming languages selection and use in real life

When companies start building AI systems, they quickly discover there isn’t a single AI programming language. Most projects rely on multiple options, each suited to a different stage of development. Data scientists may prototype models in one language, engineers optimize them in another, and backend teams deploy them using something else.

In practice, modern AI development typically involves three layers of work:

- Data preparation — loading, cleaning, and transforming datasets

- Model development — training machine learning or deep learning models

- Deployment and integration — embedding models into applications, APIs, or cloud services
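To make the three layers concrete, here is a minimal sketch in plain Python. The dataset, the `fit_line` helper, and the `predict` entry point are all illustrative assumptions; a real project would typically use libraries such as Pandas and Scikit-learn and a proper serving layer.

```python
from statistics import mean

# --- 1. Data preparation: load, clean, and transform -----------------------
# Toy (hours_studied, exam_score) rows; None marks a missing value.
raw_rows = [(1.0, 52.0), (2.0, 54.0), (None, 70.0), (3.0, 56.0), (4.0, 58.0)]
clean_rows = [(x, y) for x, y in raw_rows if x is not None and y is not None]


# --- 2. Model development: fit y = slope * x + intercept -------------------
def fit_line(rows):
    """Ordinary least squares for a single feature."""
    xs = [x for x, _ in rows]
    ys = [y for _, y in rows]
    x_bar, y_bar = mean(xs), mean(ys)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in rows) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    return slope, y_bar - slope * x_bar


slope, intercept = fit_line(clean_rows)


# --- 3. Deployment and integration: expose the model behind a function -----
def predict(hours: float) -> float:
    """The entry point an application or API layer would call."""
    return slope * hours + intercept


print(predict(5.0))  # 60.0
```

Each numbered comment maps to one layer. In a polyglot stack, the third step is often the part that gets reimplemented or wrapped in a different language.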

Different languages tend to dominate each stage.

For example, Python is widely used for building and experimenting with AI models, especially during research and prototyping. However, Python isn’t always the language used to run AI systems in production. Performance-critical components are often written in C++ (or, increasingly, Rust) because these languages provide precise control over memory and hardware. Backend teams then use Java, Scala, Go, or JavaScript (Node.js) to integrate AI models into enterprise platforms and large-scale backend services.

The result is what many engineering teams call a polyglot AI stack: multiple languages working together across research, optimization, and deployment layers to balance development speed, system performance, and long-term maintainability.

What makes a good programming language for AI?

Choosing a programming language for AI development isn’t just a technical decision. It often affects how quickly teams can hire developers, build models, and run AI systems at scale. Engineering leaders typically evaluate languages across several practical criteria.

Ecosystem and libraries

One of the biggest factors is the availability of mature frameworks and libraries. Strong ecosystems allow teams to move faster because developers can rely on proven tools instead of building everything from scratch.

Performance and scalability

If you remember Sam Altman talking about “melting GPUs” at OpenAI, you know that AI workloads can be extremely computationally intensive. This is why performance-focused languages frequently appear in the lower layers of AI systems — especially in components that must handle high throughput or real-time inference.

Talent availability

In practice, the “best” language isn’t always the most technically elegant one; sometimes it’s simply the one you can hire for most easily. Data from the Stack Overflow Developer Survey illustrates this gap. Nearly 58% of developers report using Python (57.9%), while languages like R or Lisp have much smaller communities (4.9% and 2.4%, respectively).

Integration with existing systems

AI models rarely live in isolation — they usually need to connect with databases, APIs, and production applications. As a result, organizations typically favor languages that integrate easily with their existing infrastructure. If your backend systems rely on JVM technologies, for example, adopting languages that work well in that ecosystem can simplify deployment and operations.

Development speed and maintainability

Programming languages often trade developer productivity and rapid experimentation against safety, performance, and strict correctness. For example, Rust is widely praised for its performance and memory safety, but its strict compiler and ownership model can lead to longer development cycles than languages like Python.

Top programming languages for AI development

Several programming languages play important roles in modern AI projects. Some languages are widely used across AI development, including Python, C++, and Java. Others — such as Julia, Lisp, or Prolog — tend to appear in more specialized domains, such as scientific computing or symbolic reasoning.

The following section focuses on the languages most commonly used in modern AI systems. We’ll explore several niche and domain-specific languages later in the article.

Python

Python has become the central language for tasks such as dataset exploration, training AI and machine learning models, and rapid prototyping.

One reason for this dominance is the language’s accessibility. Python’s readable syntax and high-level nature allow data scientists, engineers, and researchers to collaborate more easily across disciplines. This has helped the language become a shared foundation across AI research labs, startups, and enterprise engineering teams.

Popular Python frameworks and libraries for AI/ML

Python supports many of the most widely used AI frameworks and data science tools:

- Machine learning and deep learning: PyTorch, TensorFlow, Keras, JAX, Scikit-learn

- Generative AI and agents: Hugging Face Transformers, LangChain, CrewAI, LlamaIndex

- Data processing and infrastructure: Pandas, NumPy, FastAPI, Streamlit

- Data visualization: Matplotlib, Seaborn

While some of these technologies are not exclusive to Python (for example, TensorFlow or Hugging Face Transformers), Python typically receives first-class support and remains the primary interface for many developers.

Common AI use cases for Python

Python covers a wide range of AI use cases, including:

- machine learning and deep learning

- natural language processing

- computer vision systems

- recommendation engines

- AI experimentation and prototyping

Because of this versatility, Python often serves as the starting point for many AI initiatives.

C++

C++ is the language of choice for performance-critical parts of AI systems. While many developers train and prototype models using higher-level languages, the underlying infrastructure often relies on C++ to handle intensive computations efficiently.

The language offers precise control over memory and hardware resources, which is particularly valuable when building systems that must process large volumes of data or deliver real-time responses.

Popular C++ frameworks, libraries, and platforms for AI/ML

C++ often powers the lower layers of major AI frameworks and high-performance computing tools:

- Foundation and deep learning: PyTorch C++ API (LibTorch), TensorFlow C++ API, Caffe, Microsoft Cognitive Toolkit (CNTK)

- General machine learning: mlpack, Dlib, Shark, Shogun

- Inference and deployment: ONNX Runtime, TensorRT

- Hardware acceleration and parallel computing: CUDA, OpenCL

- Math: Eigen, Armadillo

- Real-time image and video processing: OpenCV

Common AI use cases

C++ frequently appears in systems that require high performance or low latency:

- robotics platforms

- autonomous systems

- real-time computer vision

- embedded AI applications

- high-performance inference engines

Because of these strengths, C++ often powers the core computational layers of modern AI frameworks and infrastructure.

Java

Java is a heavyweight player in enterprise-scale AI systems, Big Data integration, and Android AI development. It’s also the go-to when you need AI to run within a massive, existing server infrastructure (like a bank or an e-commerce platform).

The language’s stability, scalability, and mature tooling make it extremely attractive for long-running production applications.

Popular Java frameworks and libraries for AI/ML

Java’s machine learning ecosystem includes several established frameworks and data platforms:

- Deep learning and neural networks: Deeplearning4j (DL4J), DJL (Deep Java Library), Neuroph

- Machine learning and data mining: Weka, MOA (Massive Online Analysis), Apache Mahout, ELKI

- Natural language processing (NLP): Stanford CoreNLP, Apache OpenNLP

- Foundational math and Big Data: ND4J (N-Dimensional Arrays for Java), Apache Spark (MLlib)

Common AI use cases for Java

Java is commonly used in applications such as:

- fraud detection systems

- financial risk modeling

- recommendation platforms

- enterprise analytics systems

- large-scale data processing pipelines

For organizations with established JVM infrastructure, Java can provide a practical path for bringing AI capabilities into existing production environments.

Rust

Some people still view Rust as the “new kid on the block” for AI, but it is gaining traction quickly. The language offers C++-class performance together with memory safety and a more modern developer experience.

In the AI world, Rust primarily powers high-performance model inference and data engine building. It’s also increasingly used as a safe alternative to C++ for the “heavy lifting” behind Python libraries.

Popular Rust frameworks and libraries for AI/ML

Rust’s AI ecosystem is smaller than those of Python or Java — but it continues to grow:

- Deep learning and neural networks: Burn, Candle, tch-rs, DFDX

- General machine learning: Linfa, SmartCore

- Data engines and infrastructure: Polars, Apache Arrow (DataFusion), ndarray

- Generative AI and agents: Rig, Llama-cpp-rs

Common AI use cases

Rust is often used in infrastructure surrounding AI systems:

- high-performance inference services

- distributed data processing tools

- AI platform infrastructure

- real-time data pipelines

As the ecosystem continues to mature, Rust is becoming increasingly attractive to developers building modern infrastructure for AI platforms.

Scala

Scala is the “big data” sibling of Java, prized in AI for its functional programming strengths and its role as the native language for several massive data processing engines. It is the top choice when AI needs to scale across thousands of servers.

Because Scala runs on the Java Virtual Machine, it integrates closely with many enterprise data platforms and big data technologies.

Popular Scala frameworks and libraries for AI/ML

Scala frequently appears alongside tools designed for large-scale data processing and distributed analytics, including:

- General machine learning (batch and streaming): Apache Spark (MLlib), Flink ML

- Deep learning and neural networks: BigDL, Deeplearning4j (DL4J), ScalNet

- Natural language processing: Spark NLP, Epic

- Foundational math and data science: Breeze, Algebird, Spire

Common AI use cases

Scala is often used in:

- large-scale data pipelines

- distributed machine learning workflows

- big data analytics environments

- AI systems built on Spark-based infrastructure

As a result, Scala often plays an important role in data-intensive AI environments built on distributed computing frameworks.

Go

Go (Golang) is highly valued for AI deployment and infrastructure because of its lightweight concurrency model (goroutines) and its ability to compile to a single, fast binary. It is often used to build the “plumbing” for AI services or to run efficient model inference.

Popular Go frameworks, libraries, and other technologies

While Go is less commonly used for model training, it supports a wide range of tools relevant to AI systems:

- Deep learning and neural network inference: Gorgonia, Go-onnxruntime, Go-tensorflow

- General machine learning: Golearn, Goml, Evo

- Foundational math and data manipulation: Gonum, Gota

- Natural language processing and AI agents: Prose, LangChainGo

- AI infrastructure: LocalAI

Common AI use cases

Go is frequently used in:

- AI microservices

- distributed data pipelines

- scalable backend services

- infrastructure for AI deployment platforms

Because of these strengths, Go often appears in the infrastructure layer that supports AI systems in production.

JavaScript

JavaScript has carved out a unique niche in AI, specifically for web-based AI, edge computing, and full-stack integration. It allows developers to run models directly in the browser, reducing server costs and improving privacy by keeping data on the user’s device.

Popular JavaScript frameworks, libraries, and other technologies

Often paired with Node.js for server-side development, JavaScript is widely used to bring AI models into web applications and real-time interactive systems. It also powers AI/ML workflows thanks to an extensive ecosystem of libraries and frameworks:

- Deep learning and core frameworks: TensorFlow.js, Transformers.js, ONNX Runtime Web, Brain.js

- Generative AI & LLM orchestration: LangChain.js, Vercel AI SDK, Ollama-js

- Natural language and data processing: Natural, Danfo.js, Compromise

- Computer vision: MediaPipe

Common AI use cases

Developers typically leverage JavaScript for applications that require immediate deployment or interaction in a browser or front-end environment, such as:

- Real-time recommendation systems on websites

- Browser-based image and text processing

- Integrating AI-powered features into front-end applications

Because of its accessibility and integration capabilities, JavaScript often serves as the bridge between AI models and end-user-facing applications, complementing backend or performance-heavy languages like Python, C++, and Rust.

R

R is a programming language designed specifically for statistical computing and data analysis. While developers favor Python for general-purpose AI, R remains the leader when the focus is on statistical rigor, complex data modeling, and “explainable” AI.

It remains widely used among statisticians, researchers, and data analysts working with complex datasets.

Popular R frameworks and libraries for AI/ML

R’s ecosystem emphasizes statistical modeling, data exploration, and visualization, making it particularly useful in research-driven environments.

- General machine learning and unified frameworks: caret, mlr3, tidymodels

- Deep learning and neural networks: TensorFlow, Keras, torch for R, H2O

- Specialized algorithms: e1071, XGBoost R, randomForest

- Natural language processing: quanteda, tidytext, tm (Text Mining Package)

- Data processing and visualization: Tidyverse, ggplot2, Shiny

Common AI use cases

R is commonly used for:

- statistical machine learning

- data exploration and visualization

- predictive modeling

- research-driven data science workflows

Because of its statistical strengths, R remains an important tool for data analysis and modeling in research-focused environments.

Programming language popularity among developers (Stack Overflow Developer Survey 2025)

Technical capabilities are only one factor when choosing a programming language for AI development. In practice, engineering leaders also need to consider the size of the developer community and the long-term growth of the ecosystem.

The Stack Overflow Developer Survey offers a useful snapshot of how widely different languages are used and how developers feel about them. These signals can help organizations estimate hiring difficulty, training needs, and long-term adoption trends.

Programming language popularity among developers

| Language | Overall usage | Desired | Admired |
|---|---|---|---|
| JavaScript | 66% | 33.5% | 46.8% |
| Python | 57.9% | 39.3% | 56.4% |
| Java | 29.4% | 15.8% | 41.8% |
| C++ | 23.5% | 16.7% | 46.6% |
| Go | 16.4% | 23.4% | 56.5% |
| Rust | 14.8% | 29.2% | 72.4% |
| R | 4.9% | 4.2% | 39.6% |
| Scala | 2.6% | 3.0% | 39.4% |

Four patterns stand out from this data:

- Python trails only JavaScript in overall usage and leads among the languages most closely tied to AI work, reflecting its central role in data science and machine learning workflows. This large talent pool is one reason many organizations adopt Python when launching new AI initiatives.

- Rust stands out with the highest admiration score, indicating strong satisfaction among developers who already use it. Although the ecosystem is smaller, enthusiasm around the language continues to grow.

- Go shows a relatively high “desired” percentage, which aligns with its expanding role in cloud infrastructure and distributed systems that increasingly support AI platforms.

- JavaScript’s popularity largely comes from its use outside of AI/ML: the language has strong traction in front-end, backend (Node.js), and mobile development (React Native).
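The patterns above can be checked directly against the table. The sketch below copies the survey figures into a dictionary and computes a "momentum" ratio (desired share divided by current usage). That ratio is a hypothetical heuristic introduced here for illustration, not a metric published by the survey.

```python
# (usage %, desired %, admired %) copied from the table above.
survey = {
    "JavaScript": (66.0, 33.5, 46.8),
    "Python": (57.9, 39.3, 56.4),
    "Java": (29.4, 15.8, 41.8),
    "C++": (23.5, 16.7, 46.6),
    "Go": (16.4, 23.4, 56.5),
    "Rust": (14.8, 29.2, 72.4),
    "R": (4.9, 4.2, 39.6),
    "Scala": (2.6, 3.0, 39.4),
}

most_used = max(survey, key=lambda lang: survey[lang][0])
most_admired = max(survey, key=lambda lang: survey[lang][2])

# Hypothetical "momentum" signal: desired share relative to current usage.
# A value above 1 means more developers want the language than use it today.
momentum = {lang: desired / usage for lang, (usage, desired, _) in survey.items()}
rising = sorted(
    (lang for lang, ratio in momentum.items() if ratio > 1),
    key=momentum.get,
    reverse=True,
)

print(most_used, most_admired, rising)
```

With these figures, Rust, Go, and Scala are the only languages whose desired share exceeds their current usage, which is consistent with the Rust and Go observations above.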

For engineering leaders, these signals reinforce an important reality: selecting a language for AI development is not only a technical decision. It also affects hiring strategy, team productivity, and long-term maintainability.

Platforms and tools that power AI development

Programming languages, libraries, and frameworks are the foundation of AI systems, but much of the work happens through specialized platforms and tools. These technologies provide pretrained models, managed infrastructure, and development environments that help teams move from experiments to production systems more quickly.

Below are several categories of tools that play a major role in modern AI development.

AI model hubs and open-source ecosystems

Open-source model hubs allow engineers to discover and deploy pretrained models, accelerating development initiatives.

One of the most widely used ecosystems is Hugging Face, which hosts thousands of models and provides tools for fine-tuning and deployment. Its libraries integrate closely with frameworks such as PyTorch and TensorFlow. Popular tools in this ecosystem include:

- Hugging Face Transformers for working with pretrained language and vision models

- Hugging Face Datasets for accessing and processing machine learning datasets

- Hugging Face Hub for sharing models and collaborating across research and engineering teams

Cloud AI development platforms

Cloud providers offer integrated environments that support the entire AI lifecycle — from data preparation to model training and deployment.

Examples include:

- Amazon SageMaker and Bedrock

- Google Cloud Vertex AI

- Microsoft Azure Machine Learning

These platforms provide managed infrastructure, experiment tracking, and tools for deploying models into production systems.

Generative AI APIs and foundation model services

Generative AI has introduced a new category of development tools: APIs that provide direct access to large language models and multimodal AI systems.

Developers can integrate these capabilities using services such as the OpenAI API, Anthropic’s Claude API, DeepSeek, Mistral AI, and others.

Platforms like Amazon Bedrock also allow organizations to access and customize multiple foundation models within a managed environment.

Development environments and experimentation tools

AI teams often rely on interactive environments to explore datasets and train models.

One of the most widely used tools is Jupyter Notebook, which allows developers to combine code, visualizations, and documentation in a single workspace.

Tools such as Streamlit and FastAPI are also commonly used to turn experimental models into lightweight applications or APIs.
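As a rough illustration of what "turning a model into an API" involves, the sketch below uses only Python’s standard library as a stand-in for a framework like FastAPI. The `score` function, its weights, the `handle_predict` helper, and the port are all hypothetical; a framework would handle validation, routing, and documentation for you.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def score(features: list[float]) -> float:
    """Hypothetical stand-in for a trained model: a fixed weighted sum."""
    weights = [0.5, 0.25]
    return sum(w * x for w, x in zip(weights, features))


def handle_predict(body: bytes) -> tuple[int, dict]:
    """Validate a JSON request body and return (HTTP status, response payload)."""
    try:
        features = json.loads(body)["features"]
        return 200, {"prediction": score([float(x) for x in features])}
    except (ValueError, KeyError, TypeError):
        return 400, {"error": "expected JSON like {'features': [1.0, 2.0]}"}


class PredictHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        # Read the request body, delegate to the pure function, write JSON back.
        length = int(self.headers.get("Content-Length", 0))
        status, payload = handle_predict(self.rfile.read(length))
        data = json.dumps(payload).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


def serve(port: int = 8000) -> None:
    """Start a local server; the port is an arbitrary choice for experiments."""
    HTTPServer(("127.0.0.1", port), PredictHandler).serve_forever()
```

Keeping the request logic in `handle_predict` separates it from the HTTP plumbing, so it can be tested without starting a server. Calling `serve()` exposes it locally for manual experiments.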

Low-code and no-code AI platforms

Some platforms allow teams to build machine learning models using graphical interfaces or automated pipelines. Examples include:

- Amazon SageMaker Studio Lab

- Google Cloud Vertex AI AutoML tools

- Microsoft Azure Machine Learning automated ML features

These tools can help organizations prototype AI solutions quickly or enable analysts and domain experts to experiment with machine learning models.

Niche AI programming languages and industry-specific use cases

While mainstream languages such as Python or Java are effectively industry-agnostic, some industries require more specialized AI development tools. In research-heavy or highly technical fields, programming language choices are often shaped by statistical workflows, numerical computing needs, or domain-specific modeling requirements.

Julia and R: scientific research, healthcare, and statistical modeling

Research-driven industries often rely on programming languages designed for statistical analysis and scientific computing.

For example, R remains widely used in areas such as clinical research, epidemiology, and bioinformatics because of its extensive ecosystem of statistical libraries and visualization tools. Researchers frequently use it to analyze experimental data, build predictive models, and interpret complex datasets.

Similarly, Julia has gained traction in scientific computing environments that require both high-level programming and strong numerical performance. This makes it useful for workloads such as pharmaceutical modeling, computational biology, and large-scale scientific simulations.

Lisp and Prolog: symbolic AI and rule-based systems

Not all AI systems rely on modern machine learning models. Some applications still depend on symbolic reasoning, expert systems, and rule-based logic.

Languages such as Lisp and Prolog have long histories in these areas. They are particularly well-suited to representing logical rules, knowledge bases, and reasoning processes.
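To show the style of reasoning these languages are built for, here is a small Python sketch of forward chaining, one common strategy in expert systems. Prolog itself answers queries by backward chaining, so this illustrates the idea rather than translating Prolog semantics. The facts and rules are made up for illustration.

```python
# Each rule: (set of premises, conclusion). All facts and rules are illustrative.
RULES = [
    ({"has_feathers"}, "is_bird"),
    ({"is_bird", "cannot_fly", "swims"}, "is_penguin"),
]


def forward_chain(facts: set[str], rules=RULES) -> set[str]:
    """Apply rules repeatedly until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires when all of its premises are already known.
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


derived = forward_chain({"has_feathers", "cannot_fly", "swims"})
print(sorted(derived))
```

Every derived fact can be traced back to the rule that produced it, which is why rule-based systems are often associated with explainability.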

Although these languages are less common in modern machine learning pipelines, there are prominent examples of companies deploying them to commercial cloud infrastructure. One such example is the AI-enabled writing assistant Grammarly, whose core grammar engine is written in Common Lisp.

As AI systems evolve, new programming languages are also emerging that aim to combine the performance of systems programming languages with the productivity of high-level tools. The next section explores several languages that may shape the future of AI development.

The future of AI programming languages (2025–2030)

The programming languages that dominate AI development today may not remain the same over the next decade. As models grow larger and infrastructure becomes more complex, engineering teams are increasingly looking for tools that combine Python-level developer productivity with the performance of systems programming languages.

Practical pressures drive this shift. Training and running modern AI models can require massive computing resources, specialized hardware, and complex distributed systems. As a result, developers are experimenting with languages designed to improve performance, simplify large-scale AI infrastructure, or introduce new programming paradigms for building intelligent systems.

Several emerging languages and approaches are trying to address these challenges.

Mojo

One of the most closely watched new AI programming languages is Mojo, developed by Modular, the company co-founded by Chris Lattner, the creator of Swift and LLVM.

Mojo was designed specifically for high-performance AI and machine learning workloads. The language combines familiar Python-style syntax with low-level capabilities typically associated with languages like C++ or Rust. For AI engineers, the goal is straightforward: allow developers to write code that looks like Python while still achieving performance that’s closer to compiled systems languages.

Potential advantages of Mojo include:

- strong support for GPU and accelerator programming

- fine-grained control over memory and hardware resources

- compatibility with existing Python ecosystems

If the language matures as expected, it could significantly simplify the process of building high-performance AI systems.

Haskell

Although not new, Haskell has gained attention in some AI and machine learning communities due to its functional programming model and emphasis on mathematical correctness.

Functional programming languages can be appealing for AI research because they encourage developers to express algorithms in a declarative and mathematically rigorous way. This approach can help reduce certain types of software errors and improve code reliability in complex systems.

However, Haskell’s steep learning curve and smaller developer community have limited its adoption in mainstream AI development.

Prolog and symbolic AI revival

Interest in symbolic reasoning and hybrid AI systems has also renewed attention around languages such as Prolog.

While modern AI development is heavily focused on deep learning, some researchers and companies are exploring ways to combine neural networks with symbolic reasoning systems. This approach — sometimes referred to as neuro-symbolic AI — could benefit from languages that naturally represent logical rules and knowledge graphs.

One potential advantage of this approach is improved reliability. By combining probabilistic models with explicit rules or knowledge graphs, neuro-symbolic systems may help reduce problems such as hallucinations in generative AI systems.
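A toy version of this idea can be sketched in a few lines of Python. Everything here is an assumption made for illustration: `mock_model` stands in for a generative model that returns scored candidates, and `KNOWLEDGE_GRAPH` is a tiny hand-written fact store. Real neuro-symbolic systems are considerably more involved.

```python
from typing import Optional

# Explicit knowledge: (subject, relation) -> object. Contents are illustrative.
KNOWLEDGE_GRAPH = {
    ("Paris", "capital_of"): "France",
    ("Canberra", "capital_of"): "Australia",
}


def mock_model(question: str) -> list[tuple[str, float]]:
    """Hypothetical stand-in for a generative model: candidates with scores."""
    return [("Sydney", 0.62), ("Canberra", 0.38)]


def answer_with_rules(question: str, relation: str, obj: str) -> Optional[str]:
    """Return the highest-scoring candidate that the knowledge graph confirms."""
    candidates = sorted(mock_model(question), key=lambda c: c[1], reverse=True)
    for candidate, _score in candidates:
        if KNOWLEDGE_GRAPH.get((candidate, relation)) == obj:
            return candidate
    return None  # abstain rather than return an unverified answer


print(answer_with_rules("What is the capital of Australia?", "capital_of", "Australia"))
```

The model’s top-scoring guess ("Sydney") is rejected because no explicit fact supports it, and the verified candidate is returned instead. When nothing can be verified, the function abstains.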

Although these approaches remain relatively niche, they may play a larger role in areas that require explainability, formal reasoning, or strict decision logic.

What this means for developers and technology leaders

For most organizations, Python and other mainstream languages will likely remain central to AI development for the foreseeable future.

However, the ecosystem around AI programming languages is evolving quickly. Over time, teams may increasingly adopt specialized languages for performance-critical workloads, while continuing to use high-level tools for experimentation and research.

For engineering leaders, this means staying aware of new developments — even if the technologies themselves are still maturing.

Conclusion: Key takeaways for AI development

Choosing the right programming languages, platforms, and tools is central to building effective AI systems — and the decisions you make can directly affect your team’s productivity, system performance, and ability to scale.

Some of the most important considerations include:

- Python remains the dominant language, but performance-critical and niche workloads may rely on languages like C++, Rust, Julia, or Lisp.

- Platform choice matters: frameworks, cloud services, and generative AI APIs can significantly accelerate development and deployment.

- Talent availability, performance, and ecosystem support remain the top considerations when selecting languages and tools.

- Emerging languages and hybrid paradigms, such as Mojo and neuro-symbolic approaches, could reshape AI development over the next 5 years.

Taking these factors into account can help ensure your AI initiatives are built on a foundation that balances speed, reliability, and scalability.

If you’re exploring AI initiatives in your organization, our AI Studio can help you identify the best language and technology stack for your industry and product type. Our remote experts are always ready to assist with bringing AI projects to life, leveraging experience across Agentic AI and Generative AI systems, fraud detection solutions used at Nasdaq and the NYSE, predictive maintenance systems adopted by Fortune 50 automotive companies, and much more.

Frequently Asked Questions

Which programming languages and platforms should my team use for AI projects?

Most AI initiatives rely on a combination of programming languages and platforms. Python is the primary language for experimentation, model training, and prototyping, while C++, Rust, or Java may be used for performance-critical production components. Frameworks, cloud platforms, and APIs (including Hugging Face libraries, Amazon SageMaker, Google Vertex AI, and generative AI APIs from OpenAI, Anthropic, or Mistral) can significantly accelerate development and simplify deployment. The right stack depends on your industry, product type, and integration requirements.

How much does an AI project cost?

Costs vary widely depending on the scope, team size, model complexity, infrastructure requirements, and integration needs. Small proof-of-concept projects may cost tens of thousands of dollars, while enterprise-scale AI systems — especially those leveraging generative AI or agentic architectures — can reach millions in development, cloud compute, and ongoing maintenance.

How do I hire reliable AI developers?

Start by defining the skills your project requires: programming languages, machine learning frameworks, and domain expertise. Evaluate candidates through portfolios, technical interviews, and small trial projects. You can also leverage remote AI development experts and specialized studios to supplement in-house teams, providing vetted talent and experience in diverse applications.

Can AI models run on laptops or phones?

Smaller models or optimized versions of larger models can run locally for inference on laptops, desktops, and mobile devices. Frameworks like OpenClaw allow developers to orchestrate AI agents on local machines or edge environments, enabling experimentation and lightweight deployments without cloud dependency. Large-scale models or high-throughput generative AI systems generally still require cloud infrastructure or GPU/TPU clusters for practical performance.
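A back-of-the-envelope calculation shows why optimized (quantized) versions matter for local inference. The sketch below estimates the memory needed for model weights alone, assuming a hypothetical 7-billion-parameter model. It ignores activations, caches, and runtime overhead, so real requirements are higher.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10**9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


PARAMS = 7e9  # illustrative 7-billion-parameter model
for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: {weight_memory_gb(PARAMS, bits):.1f} GB")
```

Under these assumptions, the weights shrink from about 14 GB at 16-bit precision to about 3.5 GB at 4-bit, which is the difference between needing a dedicated GPU and fitting in the memory of a typical laptop.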

What machine learning languages do Big Tech companies use?

Different companies balance experimentation and production needs differently:

- Netflix, Spotify, and Instagram: Python for recommendation engines and content discovery

- Google: Python and C++ for research, Java for production systems

- Amazon: Python for data science and ML; Java and Go for backend integration; Rust for cloud AI/ML infrastructure development

- Facebook/Meta: Python for prototyping, C++ for performance-critical systems
